The Role of Feature Flags in A/B Testing

Most companies believe they understand their customers, only to be surprised when those customers behave differently than expected. That's where A/B testing comes in: it replaces assumptions with evidence and prevents the surprise.

We’ll look at how A/B testing works with ConfigCat’s feature flag management service, which takes your experiments to the next level by letting you remotely control and configure your features without going back to the code.

So, What is A/B Testing?

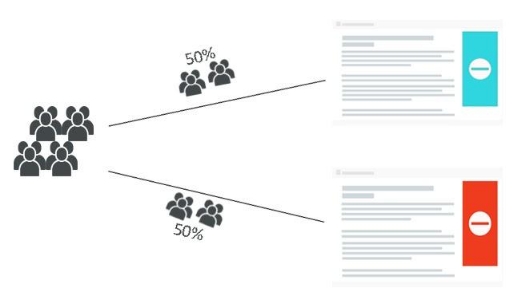

A/B Testing is a statistical technique for comparing two or more versions of something, for instance version A and version B, by showing each version to a share of your users (with two versions, each gets one half).

Illustrative Example

Imagine a poster in two colors. Half of your users will see the poster in aqua, while the other half will see it in red. At the end of the test, you’ll be able to determine which color the users preferred. Awesome, right? That’s how many businesses work these days: they let data, rather than opinion, decide.
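To make the 50/50 split concrete, here is a minimal Python sketch of one common way to do it: hashing the user ID so that each user always lands in the same group. The function name, the experiment name, and the ID format are assumptions made for illustration, not part of any particular tool.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "poster-color") -> str:
    """Deterministically place a user in variant A (aqua) or B (red)."""
    # Hash the experiment name together with the user ID so the same user
    # always gets the same variant, and other experiments split differently.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable number from 0 to 99
    return "A" if bucket < 50 else "B"


if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}")] += 1
    print(counts)  # roughly half of the users in each group
```

Because the assignment depends only on the user ID, a returning visitor keeps seeing the same color for the whole test.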

Google, Twitter, Netflix, and many other companies run thousands of A/B tests. One of the best-known A/B testing stories is about a Google team that couldn't decide between two shades of blue for their links, so they tested 41 shades of blue, which reportedly ended up bringing in an extra $200 million in revenue per year.

The nice thing about A/B testing is that your visitors will have no idea that they’re part of a test, which is important because this provides unbiased results that help you determine what works best.

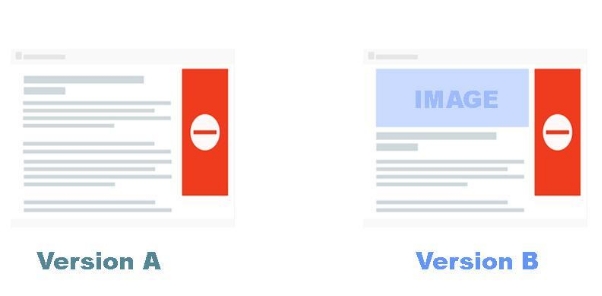

In terms of a real-world scenario, say you have a content page and want to add an image with the intent to improve your visitors' experience.

Once you run a test, you will see which version had the better metrics, and the winning version will become your new default page.

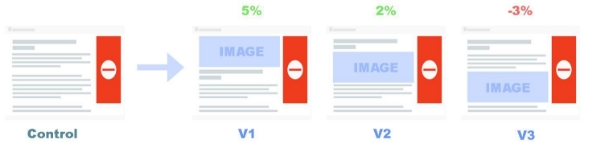

You can also test out different variations if you are uncertain about a few changes, or you think you can still improve.

So here we go, testing three versions, moving people from one experience to another. Some are good and some are bad. As a result of A/B testing, you remove the guessing game and your questions get answered with data.

A/B Testing and Feature Flags

A/B Testing was traditionally known for making cosmetic changes to your website, like changing a layout, button, or even font size. But by using feature flags (also known as feature toggles), you can more easily test functional changes. Nowadays, A/B testing goes hand in hand with feature flags.

ConfigCat provides feature flag management. With the service, you have complete control over your features and versions: you can roll out a particular version to a growing segment of your audience, and you can scale it back again.

Additionally, you can use feature flags to parameterize your code to test different configurations.
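The sketch below illustrates both ideas, a gradual rollout and a parameterized setting, with a small in-memory flag store. It is a simplified stand-in written for this article, not ConfigCat's API: the `Flag` class, the `FLAGS` dictionary, and the flag keys are all hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Flag:
    """Hypothetical flag: `on_value` is served to a percentage of users."""
    rollout_percent: int  # 0..100
    on_value: object      # value for users inside the rollout
    off_value: object     # value for everyone else


# Hypothetical flags; with a flag service these are edited remotely, not in code.
FLAGS = {
    "new_checkout": Flag(rollout_percent=10, on_value=True, off_value=False),
    "trial_length_days": Flag(rollout_percent=50, on_value=14, off_value=7),
}


def bucket(user_id: str, key: str) -> int:
    """Map a user to a stable number from 0 to 99 for the given flag."""
    digest = hashlib.sha256(f"{key}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def get_value(key: str, user_id: str, default=None):
    """Return the flag value for this user, or `default` if the flag is unknown."""
    flag = FLAGS.get(key)
    if flag is None:
        return default
    inside = bucket(user_id, key) < flag.rollout_percent
    return flag.on_value if inside else flag.off_value


if __name__ == "__main__":
    print(get_value("new_checkout", "user-42"))
    print(get_value("trial_length_days", "user-42"))
```

Raising `rollout_percent` grows the audience without moving anyone who already has the feature, and setting it to 0 scales the feature back for everyone.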

Why should you do A/B Testing?

If you have ever wondered how to increase the conversion rate of your website, A/B testing is a good place to start. It helps you better understand your product and audience by answering many of your business questions.

Everything will be presented to you as data; you will rely on statistics and data instead of intuition. These metrics will help you analyze and quantify the changes you make to your product. There are no guesses, no opinions, just data that help you make informed decisions.

Also, it's worth noting that tests can help reduce risks.

Even if your test results are disappointing, any outcome is a learning opportunity that helps you understand your audience better, which will help you with upcoming changes.

Where and What to A/B Test?

Testing can be done almost anywhere on your site: on your home page, content pages, signup form, or your ads.

And there are a lot of use cases as well. You can test the pages on your site, both visually and functionally. It's also beneficial to test the pricing when selling products. Aside from that, you can test your business model, for example, by offering a 14-day trial instead of a 7-day trial.

How To Get Started?

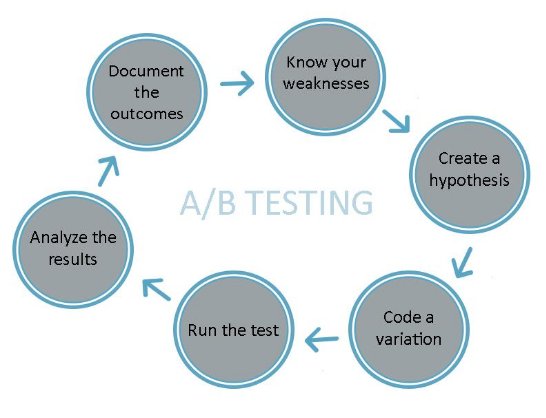

Step 1: Know your weaknesses

Your first step is to know your weaknesses by analyzing your website. You can use analytics tools (Amplitude, Mixpanel, or similar) to see where your website pages are underperforming.

For example, look for pages with shallow scroll depth or low conversion rates. You can also use click heatmaps to analyze how visitors interact with your site.
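As a rough illustration of this step, the following Python sketch ranks pages by conversion rate. The page names and numbers are made up; in practice they would come from an export of your analytics tool.

```python
# Hypothetical per-page numbers, as they might be exported from an analytics tool.
page_stats = {
    "/home": {"visitors": 12000, "conversions": 540},
    "/pricing": {"visitors": 4000, "conversions": 60},
    "/signup": {"visitors": 2500, "conversions": 200},
}


def conversion_rates(stats):
    """Conversion rate per page, skipping pages without traffic."""
    return {
        page: s["conversions"] / s["visitors"]
        for page, s in stats.items()
        if s["visitors"] > 0
    }


def weakest_pages(stats, limit=1):
    """Pages with the lowest conversion rate first."""
    rates = conversion_rates(stats)
    return sorted(rates, key=rates.get)[:limit]


if __name__ == "__main__":
    for page, rate in sorted(conversion_rates(page_stats).items(), key=lambda kv: kv[1]):
        print(f"{page}: {rate:.1%}")
    print("Start with:", weakest_pages(page_stats))
```

With these sample numbers, the pricing page converts worst, which makes it a natural candidate for the first hypothesis.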

Step 2: Create a hypothesis

The second step is to create your hypothesis. Once you know what is going wrong on your site, clarify what you need to change and how you expect that change to make things better.

Set your goals and define what success means to you in this case; this will help you understand the purpose of the experiment.

Note: Trying out random ideas can be pretty costly. You might waste valuable time and traffic. This is not recommended.

Step 3: Code a variation

Now it’s time to create a variation based on what you’d like to improve. When setting up the variations, always ask yourself what you can learn if this variation wins or loses. That way, whether the final results are positive or negative, you'll end up learning about your site through the experiment. Afterward, code the variation under a ConfigCat feature flag, so you can fully control it.

To set up your variation, sign up for a free account on ConfigCat and create a feature flag for it.
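In code, putting a variation under a flag usually means a single branch on the flag value. The Python sketch below uses the earlier content page example (with and without an image). The `get_flag` and `track` callables are placeholders for your flag SDK's lookup and your analytics tool's event call; their signatures, the flag key, and the event fields are assumptions made for this example.

```python
from typing import Callable

# Assumed signature for the flag lookup: (flag key, user ID, default) -> bool.
FlagLookup = Callable[[str, str, bool], bool]


def render_content_page(
    user_id: str,
    get_flag: FlagLookup,
    track: Callable[[dict], None],
) -> str:
    """Render the content page, with the image only for users in variation B."""
    show_image = get_flag("show_content_image", user_id, False)
    variant = "B" if show_image else "A"

    # Record which variation the user saw, so conversions can be attributed later.
    track({
        "event": "experiment_exposure",
        "experiment": "content_page_image",
        "user_id": user_id,
        "variant": variant,
    })

    body = "<h1>Article</h1><p>Page text</p>"
    if show_image:
        body = '<img src="image.png" alt="Illustration">' + body
    return body


if __name__ == "__main__":
    events = []
    # Stub lookup that turns the flag on for everyone, for demonstration only.
    print(render_content_page("user-1", lambda key, user, default: True, events.append))
    print(events)
```

Keeping the flag check in one place makes it easy to delete the losing branch once the test is over.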

Step 4: Run the test

This is the step where you run your test using your favorite analytics tool. Make sure that the measurements are in place, so your test results will be clear and easy to interpret.

Things to watch out for:

- Minimum sample size. To ensure that your test results can be trusted, you should determine how many visitors you need.

For example, suppose 6% of your visitors do what you want them to do, like purchasing something or submitting a form, and you want to raise that 6% conversion rate by 30% (to 7.8%). If you plug these numbers into a sample size calculator, it will tell you that you need about 2,840 visitors for each variant.

You can also enter your number of daily visitors into the calculator to estimate how long the test should run. For example, if your website averages 800 daily visitors and you have two variations, you need 2 × 2,840 = 5,680 visitors in total, so the test should run for about 8 days. You can stop the test early if you detect a problem, but ending it before you reach the minimum sample size makes the results less trustworthy.

- External factors. When planning a test, consider external factors such as the season, holidays, weather, and other circumstances. Testing your sale ads on Black Friday, for example, wouldn't be a good idea.

- Consider the type of device. When you run the test on all the traffic flowing through your multi-platform software, such as desktop and mobile, make sure to check how the test looks on each platform separately.
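The minimum sample size from the list above can be approximated with the standard formula for comparing two proportions. The Python sketch below assumes a two-sided test at a 5% significance level with 80% power, which are common defaults but still assumptions. Online calculators use slightly different formulas and settings, so expect a number in the same range as the one quoted above rather than an exact match.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect the given relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


def duration_days(per_variant, variants, daily_visitors):
    """Days needed to collect the required visitors across all variants."""
    return math.ceil(per_variant * variants / daily_visitors)


if __name__ == "__main__":
    n = sample_size_per_variant(baseline=0.06, relative_lift=0.30)
    print("Visitors per variant:", n)
    print("Days at 800 visitors/day:", duration_days(n, variants=2, daily_visitors=800))
```

Smaller expected lifts need far more visitors, because the required sample size grows with the inverse square of the difference you want to detect.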

Once you have considered these factors, it is time to launch your test.

Step 5: Analyze the results

In this phase, we monitor the performance, analyze the results, and evaluate how each variation performed compared to the other. That doesn’t happen inside your ConfigCat manager; you usually need an analytics dashboard that not only shows numbers but also explains where they are coming from.

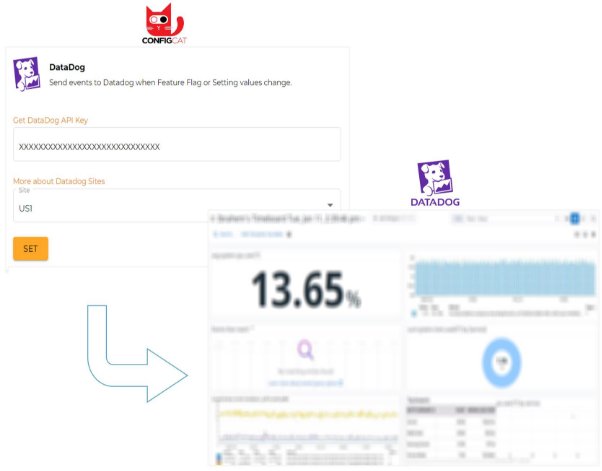

Datadog can be used for this. ConfigCat has a Datadog integration that makes it easy to use: create a Datadog account and enter your Datadog API key in the Datadog field on ConfigCat's Integrations page, and voila, all the events related to your flag will be sent to your analytics tool.

Let's say your current conversion rate is 3%, and after testing your changes you measure a 4% conversion rate. That is an increase of one percentage point, or about a third in relative terms. Now it’s your decision whether to apply these changes to your site or to try another hypothesis.

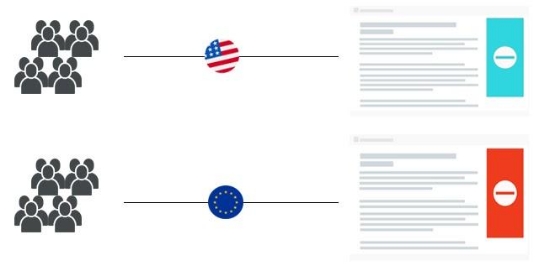

You may also want to target your variations at a specific kind of audience, for example your most valuable customers, or visitors from the United States or Europe, to find out what they think about these changes. ConfigCat lets you do that.
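Conceptually, targeting is a list of rules evaluated against attributes of the visitor. The Python sketch below shows the idea with made-up rules; the `Visitor` fields, the spending threshold, and the shortened country list are assumptions for illustration and do not reflect how ConfigCat stores its rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Visitor:
    user_id: str
    country: str           # country code such as "US" or "DE"
    lifetime_value: float  # total spend so far

EUROPE = {"DE", "FR", "HU", "NL", "ES", "IT"}  # shortened, illustrative list

# Hypothetical rules, checked top to bottom; the first match decides the variant.
RULES = [
    ("most valuable customers", lambda v: v.lifetime_value >= 1000, "B"),
    ("US or European visitors", lambda v: v.country == "US" or v.country in EUROPE, "B"),
]
DEFAULT_VARIANT = "A"


def variant_for(visitor: Visitor) -> str:
    """Return the variant of the first matching rule, else the default."""
    for _name, matches, variant in RULES:
        if matches(visitor):
            return variant
    return DEFAULT_VARIANT


if __name__ == "__main__":
    print(variant_for(Visitor("u1", "US", 0.0)))
    print(variant_for(Visitor("u2", "BR", 0.0)))
```

Keep in mind that results from a targeted audience only tell you about that audience, so compare like with like when you analyze them.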

Another factor to consider is statistical significance. In short, it tells you whether your results are likely caused by what you changed or by randomness. The usual practical choice is a 95% confidence level, which corresponds to a 5% significance level: the probability you accept of declaring a winner when the variations actually perform the same. The p-value is what you compute from your data: the probability of seeing a difference at least as large as the one you measured if there were no real difference. When the p-value is below 5%, the result is considered statistically significant.
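One common way to check significance for conversion rates is a two-proportion z-test. The Python sketch below applies it to the 3% versus 4% example, assuming 5,000 visitors per variation; the visitor counts are hypothetical, and your analytics tool may use a different test.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the assumption that both variations perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 150 of 5,000 visitors convert on A, 200 of 5,000 on B.
    z, p = two_proportion_z_test(150, 5000, 200, 5000)
    print(f"z = {z:.2f}, p-value = {p:.4f}")
    print("Significant at the 5% level" if p < 0.05 else "Not significant at the 5% level")
```

The same one-point difference measured on only a few hundred visitors would not pass the test, which is why the minimum sample size matters.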

Step 6: Document the outcomes

Last but not least, don’t forget to document your results and highlight the positives and negatives. After all, we are learning and testing. These documents will become the basis for holding quick and productive brainstorming sessions with your team.

Once you've completed all the steps, repeat them. Repetition is the key to A/B testing. The more you do it, the better you get; it's not magic.

Who should use A/B Testing?

- Designers. The most popular use of A/B Testing is to test out new design ideas because even a small change in text size or color can alter the entire user experience.

- Product Managers. As decision-makers, they must know how their decisions impact customers, especially the crucial ones; otherwise, they won't see the effectiveness of their changes quickly.

- Marketers. They use A/B testing to test images, videos, or product descriptions to decide what, where, and how to advertise.

- Data Analysts. Even if the test itself is straightforward, the data you obtain might not be. Data analysts can dig deep and use this information to build a more holistic picture of how to serve customers and to answer every “why?”.

Conclusion

A/B Testing is invaluable to any digital decision-maker in an online environment and crucial for improving conversion rates.

Make sure you check out the ConfigCat Blog for a wide variety of content. You can also find ConfigCat on Facebook, Twitter, and LinkedIn.