Using Amplitude in a Vue.js A/B Testing Scenario

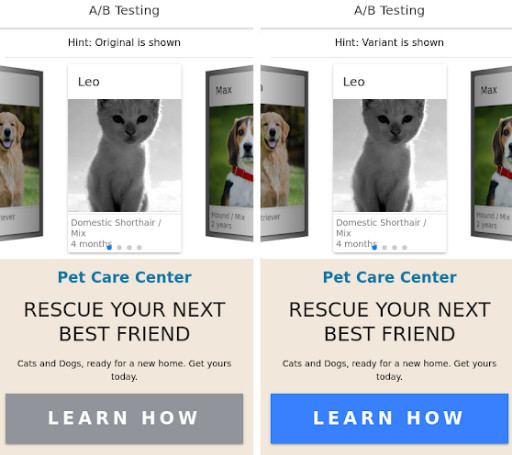

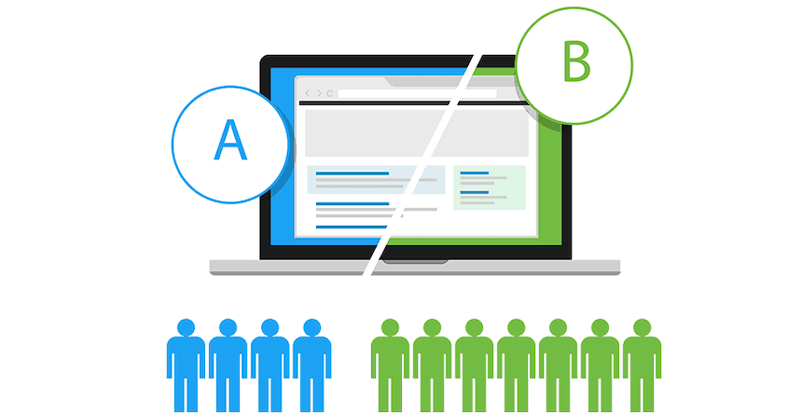

When releasing new features or changes in software, we can rely on A/B testing to make informed decisions. In this type of testing, we show the new change or feature to a subset of users and measure its impact before deciding to release it to everyone. This lets us roll out updates carefully without negatively impacting the user experience.