A/B Testing React Native Apps with Feature Flags

Suppose you have two variations of a software product, but you're not sure which one to deploy. That's the problem A/B testing solves. And in mobile development, running a clean A/B test is harder than it sounds because you can't just toggle a server-side flag and move on. You're dealing with bundle updates, app store review times, and users who haven't updated in months.

This guide shows how to run a proper A/B test in a React Native app using ConfigCat feature flags for the rollout and Amplitude for analytics. By the end, you'll have a working implementation, a method for reading the results, and a clear picture of what to do when the test is over.

What Is an A/B Test?

A/B testing is an experimentation method in which two app variations, commonly called A and B, are released to different groups of users to determine which performs better. It also reduces the risk of introducing bugs when a feature is permanently released, since only a subset of users sees and uses it while feedback is collected.

Typically, Variation A serves as the control: the benchmark that Variation B is compared against. Next, I'll show you how to set up an A/B test in a demo React Native app to decide whether a new feature (Variation B) should be rolled out.

The Demo A/B Test Experiment

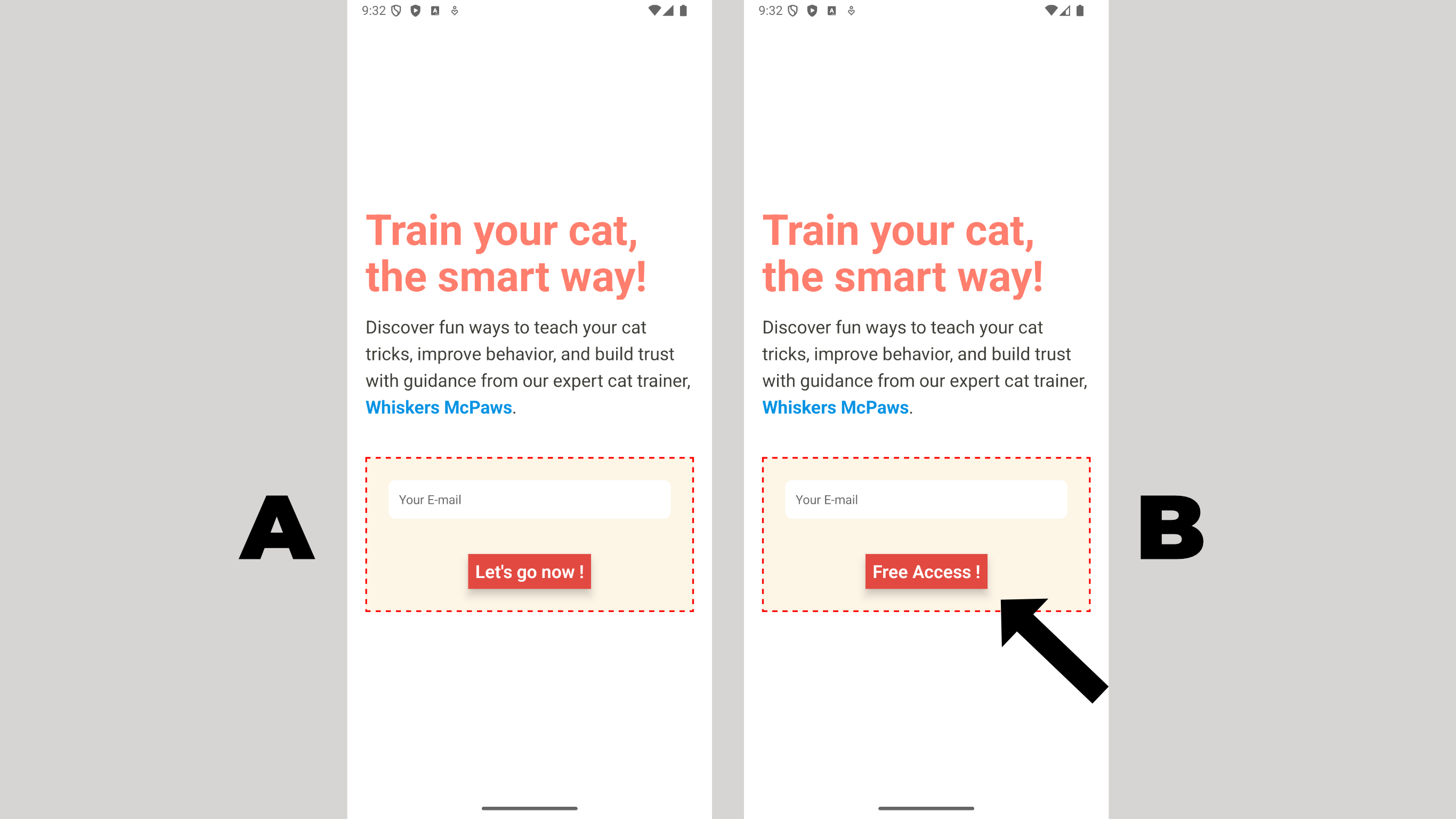

Consider the following scenario: on average, Variation A accounts for about 400 user sign-ups per month among 10% of users. After some market research, the team decided to run an experiment to determine whether the sign-up button text was limiting sign-ups.

Will changing the button text (as in Variation B) lead to more sign-ups?

To move forward with the experiment, we need a way to roll out Variation B to 10% of users. We'll use ConfigCat to split traffic (10% of users see Variation B, 90% see Variation A) and Amplitude to track sign-up events for both groups. At the end, we compare them and make a data-backed decision.

This is the same pattern used in production by teams doing experimentation and growth hacking with feature flags. The implementation scales from a button text test to a full onboarding flow experiment.

Why Feature Flags for A/B Testing in React Native

The standard mobile A/B testing approach involves shipping separate builds, using a remote config service, or waiting for app store review cycles for each variant. None of these is fast. None of them gives you a kill switch.

A feature flag framework gives you a better model. You ship one build with both variations already in the code. A flag determines which variant each user sees at runtime. You can start, pause, and end the test from a dashboard without touching your codebase.

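This also means you can roll back quickly if Variation B causes problems. Turn the flag off, and every user reverts to Variation A as soon as their app fetches the updated config, which in ConfigCat's default auto-polling mode happens every 60 seconds.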

Prerequisites

Before starting, you'll need:

- A React Native project

- A free ConfigCat account

- A free Amplitude account

- Node.js 16+ and a working React Native development environment

Install the dependencies you'll need upfront:

```shell
npm install configcat-react @amplitude/analytics-react-native @react-native-async-storage/async-storage
```

A note on the ConfigCat package: configcat-react is the right choice for React Native projects. It provides the ConfigCatProvider wrapper and the useFeatureFlag hook, the same API used in React web apps, and is fully compatible with React Native. Under the hood, it uses ConfigCat's unified JavaScript SDK, which supports Browser, Node.js, React Native, and more from a single package.

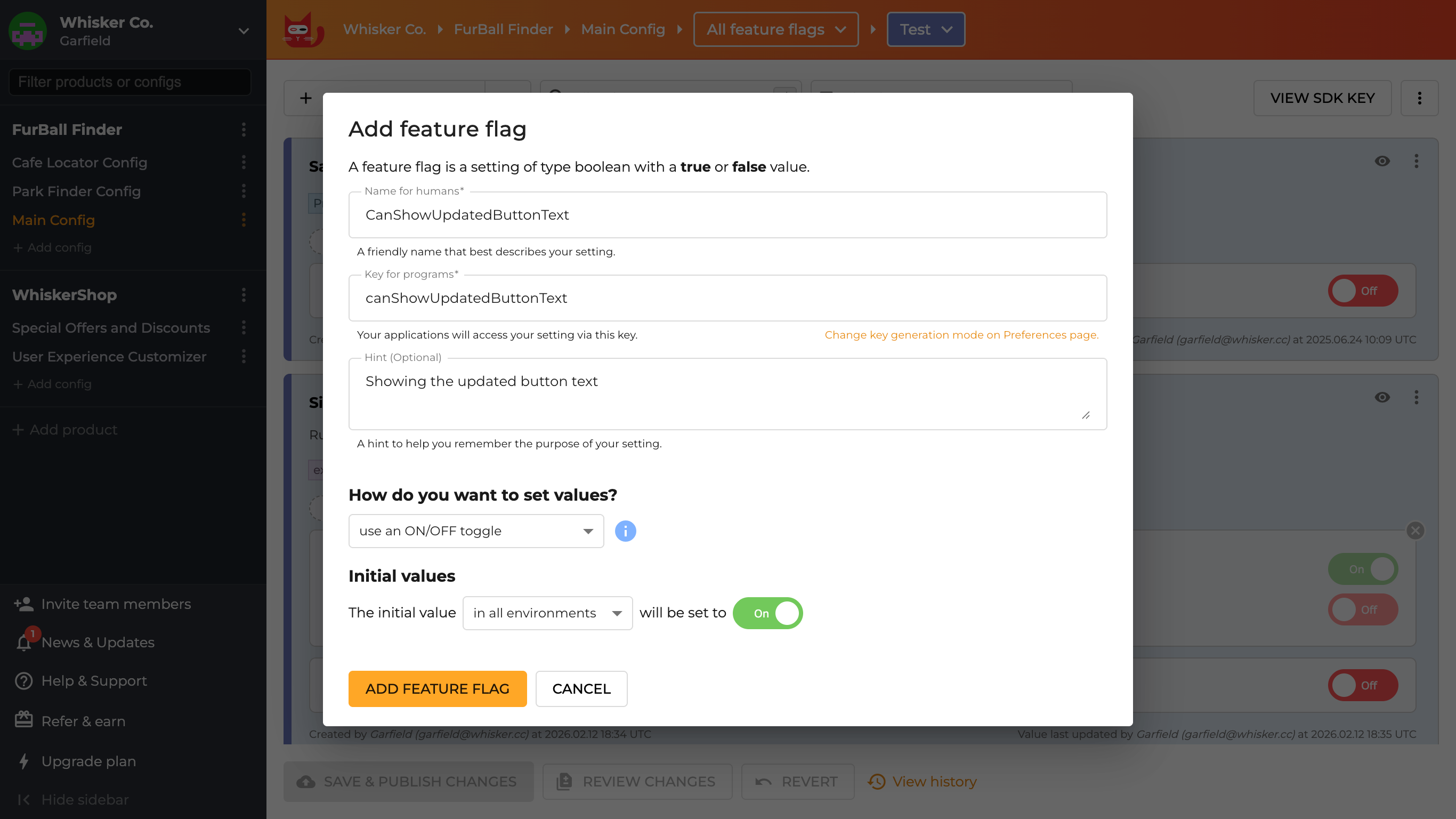

Step 1: Create a Feature Flag in ConfigCat

The feature flag is the control mechanism. It decides which variation each user sees, consistently, based on a percentage split. Log in to the ConfigCat dashboard and create a new feature flag with the following details (the code in this guide uses the key canShowUpdatedButtonText):

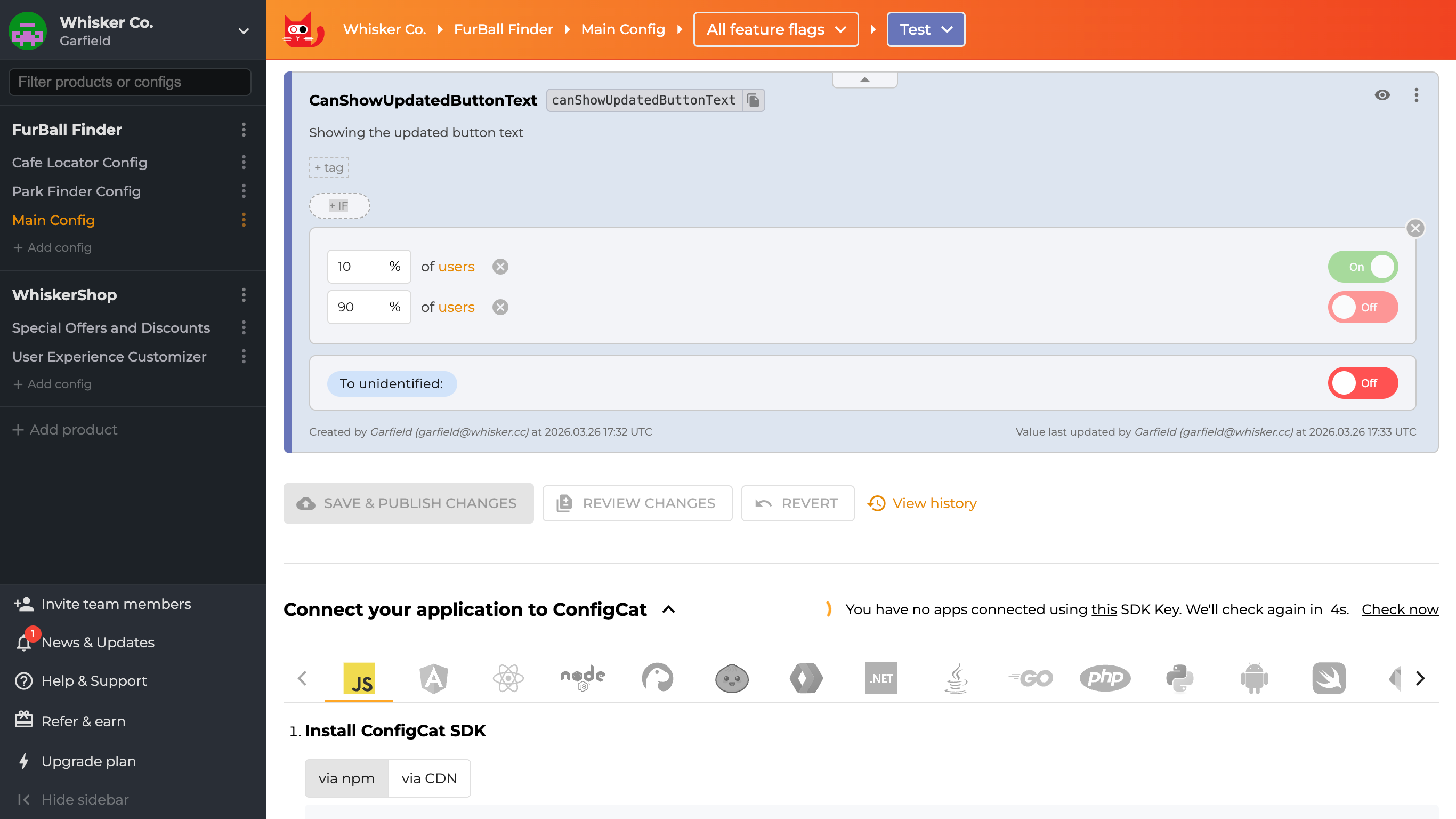

Once the flag is created, target 10% of the user base with percentage options: serve true to 10% of users and false to the remaining 90%.

The 10% figure is intentional. You're not trying to find a winner quickly; you're trying to minimize risk while gathering a real signal. If Variation B causes unexpected problems, only 10% of users are affected, and you can turn it off immediately.

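ConfigCat assigns users to the 10% group consistently: the assignment is derived from a hash of the user's identifier, so the same user sees the same variation in every session. This requires passing a User Object with a stable identifier when the flag is evaluated (for users who haven't signed up yet, an anonymous install ID is one option). This consistency is essential. If the same user saw different variations across visits, you couldn't reliably attribute a conversion to either group.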
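To make the consistency property concrete, here is a minimal sketch of deterministic percentage bucketing. It is an illustration only, not ConfigCat's actual algorithm: the FNV-1a hash and the helper names (fnv1a32, bucketFor, assignVariant) are assumptions chosen for this example. The idea carries over, though: hash a stable user identifier together with the flag key, map the hash to a bucket from 0 to 99, and serve Variation B to buckets below the rollout percentage.

```typescript
type Variant = "A" | "B";

// 32-bit FNV-1a hash over the string's UTF-16 code units.
// Chosen here because it is short and dependency-free;
// ConfigCat uses its own hashing internally.
function fnv1a32(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    // Multiply by the FNV prime, keeping the result an unsigned 32-bit integer.
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash;
}

// Maps a user to a bucket in [0, 99]. Mixing in the flag key means a user's
// bucket for one flag doesn't determine their bucket for another.
function bucketFor(flagKey: string, userId: string): number {
  return fnv1a32(`${flagKey}:${userId}`) % 100;
}

// Users whose bucket falls below the rollout percentage get Variation B.
function assignVariant(flagKey: string, userId: string, percentB: number): Variant {
  return bucketFor(flagKey, userId) < percentB ? "B" : "A";
}

// The same user gets the same answer on every call, i.e. in every session.
const first = assignVariant("canShowUpdatedButtonText", "user-42", 10);
const again = assignVariant("canShowUpdatedButtonText", "user-42", 10);
console.log(first === again); // true
```

A side effect of this design: in the sketch, raising the percentage from 10 to 20 keeps everyone who was already in Variation B there, because their buckets are still below the new threshold.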

Step 2: Integrate ConfigCat into React Native

Before wiring up the A/B test logic, you'll need ConfigCat running in your React Native app. If you haven't done that yet, the guide Using Feature Flags in React Native walks through the full setup.

Step 3: Connect ConfigCat to Amplitude

To track every user sign-up event when the button is pressed, you'll use Amplitude's analytics platform. If you don't have an account yet, sign up for a free user account.

Once you're in, set up a data source so Amplitude knows where events are coming from:

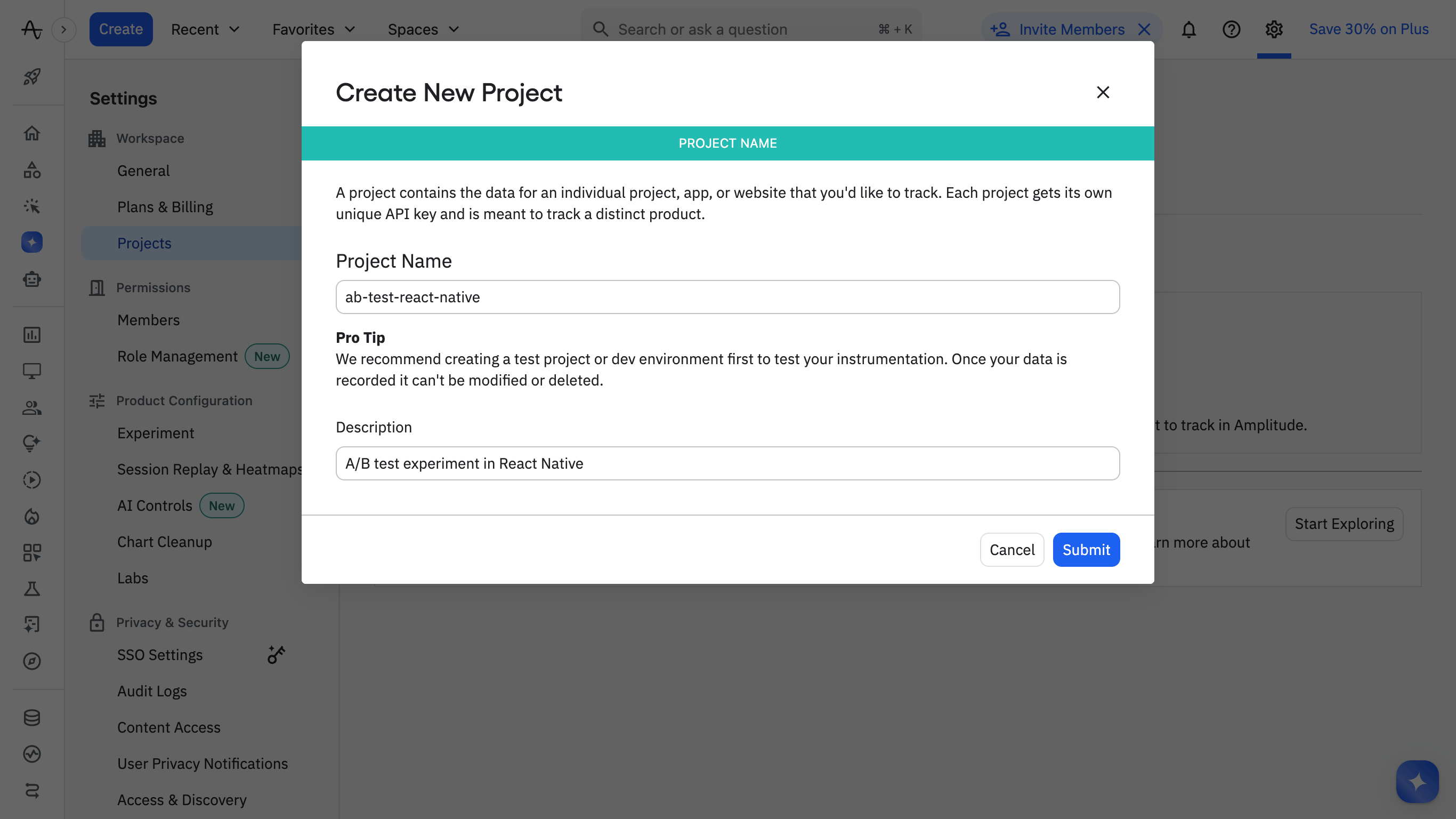

1. Create a new project in Amplitude for the test experiment:

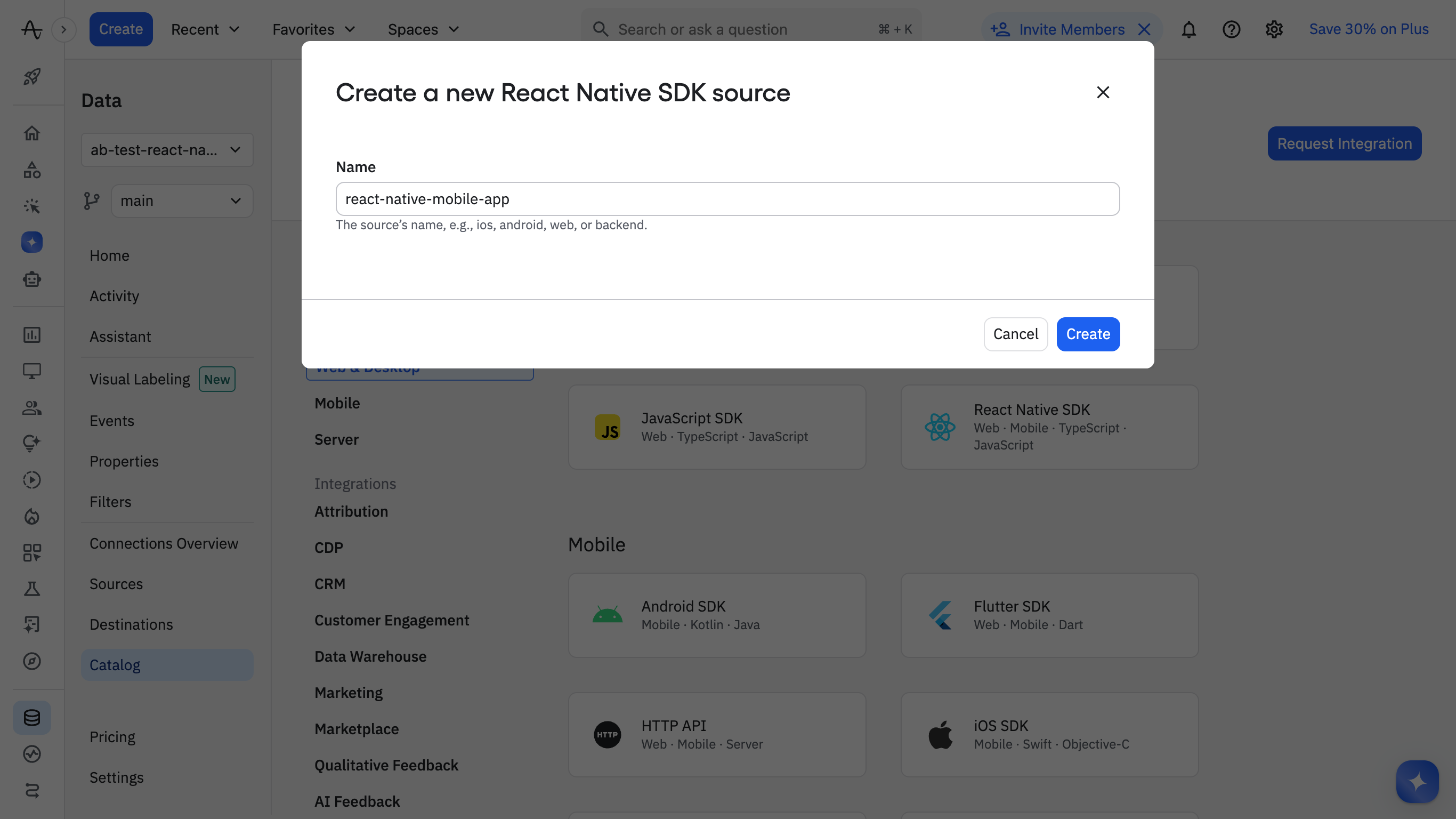

2. Add the Amplitude React Native SDK as a source for the project.

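3. Create a reusable service for Amplitude that initializes the SDK once and that you can import throughout your app. The SignupButton component in the next step imports it from @/services/amplitude-service.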
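A minimal sketch of such a service follows. To keep it runnable without the Amplitude SDK, it takes the tracking function as a parameter; the names createAnalyticsService, AnalyticsEvents, and TrackFn are hypothetical. Centralizing event names is a design choice rather than an Amplitude requirement: it stops a typo such as "Signup Button Clicked" from silently creating a second event in your project.

```typescript
// Shape of the tracking call the service depends on. Amplitude's
// track(eventType, eventProperties) fits this shape; the return value is ignored.
type TrackFn = (
  eventType: string,
  eventProperties?: Record<string, unknown>,
) => unknown;

// Every event name the app sends, defined in one place.
export const AnalyticsEvents = {
  SignupButtonClicked: "SignupButton Clicked",
} as const;

export type AnalyticsEventName =
  (typeof AnalyticsEvents)[keyof typeof AnalyticsEvents];

export function createAnalyticsService(send: TrackFn) {
  return {
    // Only known event names type-check, so call sites can't drift apart.
    track(event: AnalyticsEventName, properties: Record<string, unknown> = {}): void {
      send(event, properties);
    },
  };
}

// Example with a console-backed sender standing in for Amplitude.
const analytics = createAnalyticsService((type, props) => console.log(type, props));
analytics.track(AnalyticsEvents.SignupButtonClicked, { buttonText: "Free Access !" });
```

In the app, the service file would call init with your API key from @amplitude/analytics-react-native and export createAnalyticsService((type, props) => amplitude.track(type, props)) as its default export, so the amplitude.track(...) call in SignupButton keeps working unchanged.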

Step 4: Add a Sign-up Event

Next, define the event you'll use as your primary conversion metric:

1. Click the Events link in the left sidebar.

2. In the app, track a SignupButton Clicked event when the sign-up button is pressed. The feature flag controls which button text is shown, while Amplitude records which variant users click. In this example, the button text acts as the variant identifier. In a production setup, you may want to track an explicit variant property (e.g., "A" or "B") to make analysis clearer and more reliable.

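```tsx
import { useFeatureFlag } from "configcat-react";
import { Text, StyleSheet, Pressable } from "react-native";
import amplitude from "@/services/amplitude-service";

export function SignupButton() {
  // Evaluates the flag for the current user. Until the config has loaded
  // (or if evaluation fails), the default value false is used, i.e. Variation A.
  const { value: isCanShowUpdatedButtonTextEnabled } = useFeatureFlag(
    "canShowUpdatedButtonText",
    false,
  );

  const handleSignupButtonClick = (buttonText: string) => {
    // Record the conversion event; buttonText tells the variations apart.
    const eventProperties = { buttonText: buttonText };
    amplitude.track("SignupButton Clicked", eventProperties);
  };

  // Variation B shows the new copy; Variation A (the control) keeps the original.
  const buttonText = isCanShowUpdatedButtonTextEnabled
    ? "Free Access !"
    : "Let's go now !";

  return (
    <Pressable
      style={styles.signupButton}
      onPress={() => handleSignupButtonClick(buttonText)}
    >
      <Text style={styles.signupButtonText}>{buttonText}</Text>
    </Pressable>
  );
}

// Example styles for the button; adjust to match your design.
const styles = StyleSheet.create({
  signupButton: {
    backgroundColor: "#1f6feb",
    paddingVertical: 12,
    paddingHorizontal: 24,
    borderRadius: 8,
    alignItems: "center",
  },
  signupButtonText: {
    color: "#ffffff",
    fontSize: 16,
    fontWeight: "600",
  },
});
```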

3. Run the app and press the sign-up button a few times, then confirm that the event appears on the Events page.

4. Click the name of the event, then click the Add to plan button to add the event to your tracking plan.
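Step 2 above recommends recording an explicit variant rather than relying on the button text. A minimal sketch of that, assuming the hypothetical property names variant and flagKey (Amplitude accepts arbitrary event properties, so any consistent names would work) and hypothetical helper names:

```typescript
type Variant = "A" | "B";

// Button copy for each variation, matching the strings used in SignupButton.
const BUTTON_TEXT: Record<Variant, string> = {
  A: "Let's go now !",
  B: "Free Access !",
};

// The flag serves true to Variation B and false (the default) to Variation A.
function variantFromFlag(isUpdatedTextEnabled: boolean): Variant {
  return isUpdatedTextEnabled ? "B" : "A";
}

// Event properties for a sign-up click. The explicit variant label keeps the
// analysis valid even if someone later edits the button copy.
function signupClickProperties(flagKey: string, isUpdatedTextEnabled: boolean) {
  const variant = variantFromFlag(isUpdatedTextEnabled);
  return { variant, flagKey, buttonText: BUTTON_TEXT[variant] };
}

console.log(signupClickProperties("canShowUpdatedButtonText", true));
```

In SignupButton, the handler could then pass signupClickProperties("canShowUpdatedButtonText", isCanShowUpdatedButtonTextEnabled) to amplitude.track, and the chart could be grouped by variant instead of buttonText.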

Step 5: Set Up the Analysis Chart in Amplitude

To analyze the events and get real-time visual insights into Variation B, I'll set up an Analysis chart.

1. Back on the Events page, select the event, then click the Create Chart button at the top right. This will create a new chart for analyzing the test results.

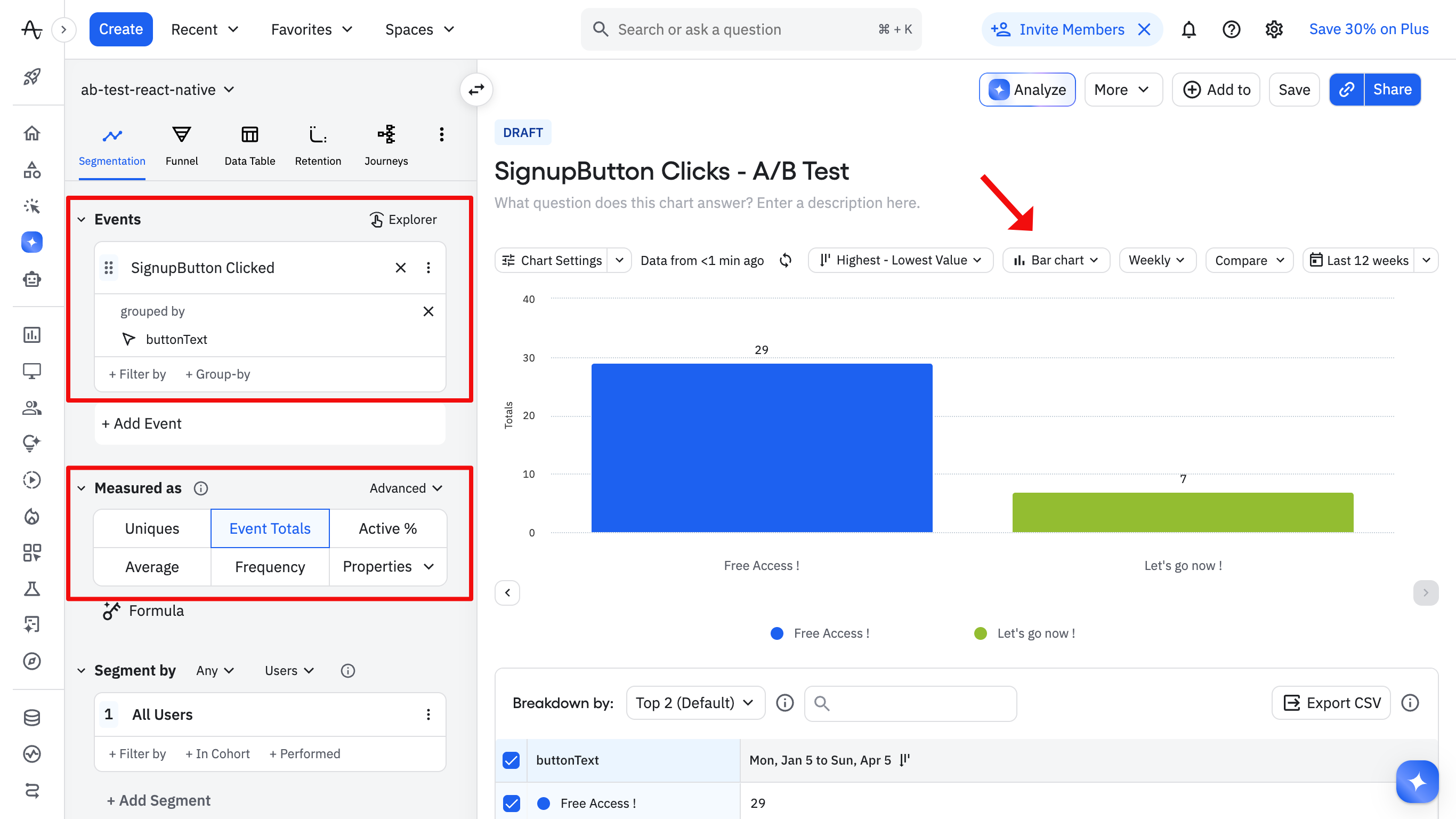

2. Add the SignupButton Clicked event to the chart, group it by the buttonText property, and select Bar chart.

Check the chart daily while the test is running. You're not looking for a winner yet. You're watching for problems. A sudden drop in Variation B sign-ups could mean a UI bug or a confusing change that made things worse. Because you are using ConfigCat, you can turn the flag off immediately, and every user returns to Variation A without a redeployment.
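If you'd rather not eyeball the chart, the daily check can be written as a simple rule. This is a hypothetical sketch, assuming you can export per-variation counts of exposed users and sign-ups; the 30% relative-drop threshold and the 200-user minimum are arbitrary example values, not recommendations. It compares conversion rates rather than raw counts because the two groups differ in size.

```typescript
interface GroupStats {
  exposedUsers: number; // users who saw this variation
  signups: number; // users in that group who clicked the sign-up button
}

// Conversion rate for a group; 0 when nobody has been exposed yet.
function conversionRate(group: GroupStats): number {
  return group.exposedUsers === 0 ? 0 : group.signups / group.exposedUsers;
}

// True when Variation B converts more than `maxRelativeDrop` worse than
// Variation A. Returns false until B has at least `minExposed` users, since
// a handful of early users makes the rate too noisy to act on.
function shouldTurnOffVariantB(
  a: GroupStats,
  b: GroupStats,
  maxRelativeDrop = 0.3,
  minExposed = 200,
): boolean {
  if (b.exposedUsers < minExposed) return false;
  const rateA = conversionRate(a);
  if (rateA === 0) return false;
  return conversionRate(b) < rateA * (1 - maxRelativeDrop);
}

// A converts at 10%, B at 5%: more than a 30% relative drop.
console.log(
  shouldTurnOffVariantB({ exposedUsers: 1800, signups: 180 }, { exposedUsers: 200, signups: 10 }),
); // true
```

This is a tripwire for obvious breakage, not a statistical test; a true result is a prompt to investigate and, if needed, turn the flag off.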

Step 6: Reading the Results

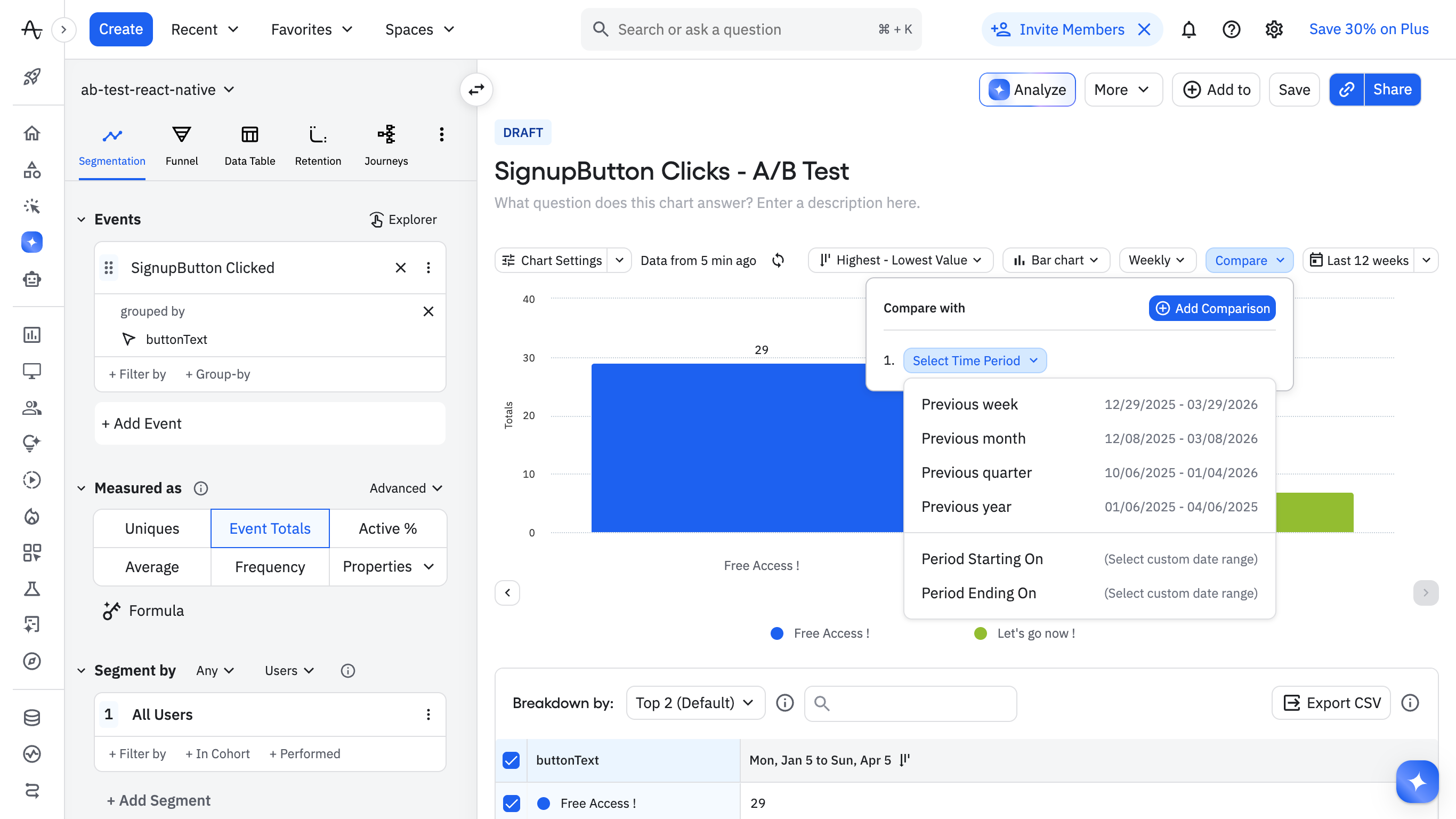

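When your testing period is over, compare the results you gathered with those from a past period. Amplitude's Compare dropdown makes this easy. When you compare the two variations with each other, use sign-up rates (sign-ups divided by the number of users who saw each variation) rather than raw counts: Variation A is shown to 90% of users and Variation B to 10%, so A will always collect more sign-ups in absolute terms. Together, these comparisons help you make an informed decision about which variation to roll out or keep.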
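To judge whether a difference in sign-up rates is larger than chance would explain, a common choice is a two-proportion z-test. The sketch below is one way to compute it, assuming you can get, for each variation, the number of users who saw it and the number who signed up (the exposure tracking in Step 8 is one source for the first number). The normal CDF uses the Abramowitz and Stegun 7.1.26 approximation of erf, accurate to about 1.5e-7; the function names and example counts are made up for illustration.

```typescript
interface VariantResult {
  users: number; // users exposed to the variation
  conversions: number; // users who signed up (count users, not clicks)
}

// erf(x) via Abramowitz & Stegun formula 7.1.26 (max error about 1.5e-7).
function erf(x: number): number {
  const sign = x < 0 ? -1 : 1;
  const ax = Math.abs(x);
  const t = 1 / (1 + 0.3275911 * ax);
  const poly =
    t * (0.254829592 + t * (-0.284496736 + t * (1.421413741 + t * (-1.453152027 + t * 1.061405429))));
  return sign * (1 - poly * Math.exp(-ax * ax));
}

// Standard normal cumulative distribution function.
function normalCdf(z: number): number {
  return 0.5 * (1 + erf(z / Math.SQRT2));
}

// Two-sided, two-proportion z-test with a pooled standard error.
// A positive z means Variation B converts better than Variation A.
function twoProportionZTest(a: VariantResult, b: VariantResult) {
  const rateA = a.conversions / a.users;
  const rateB = b.conversions / b.users;
  const pooled = (a.conversions + b.conversions) / (a.users + b.users);
  const se = Math.sqrt(pooled * (1 - pooled) * (1 / a.users + 1 / b.users));
  if (se === 0) return { rateA, rateB, z: 0, pValue: 1 }; // no variation at all
  const z = (rateB - rateA) / se;
  const pValue = 2 * (1 - normalCdf(Math.abs(z)));
  return { rateA, rateB, z, pValue };
}

// Hypothetical counts: 9,000 users saw A (4% signed up), 1,000 saw B (5.5%).
const result = twoProportionZTest(
  { users: 9000, conversions: 360 },
  { users: 1000, conversions: 55 },
);
console.log(result.z.toFixed(2), result.pValue.toFixed(3));
```

With these counts, z comes out at roughly 2.26 and the two-sided p-value at roughly 0.024, below the common 0.05 threshold. Decide the sample size and threshold before the test starts and evaluate once at the end: checking the p-value daily and stopping at the first "significant" reading inflates the false-positive rate. Because the sign-up event fires on every click, deduplicate by user before counting conversions.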

You can find the final app here.

Step 7: Ending the Test

Once you have a clear result, close the experiment cleanly. A flag that is never removed becomes a zombie flag, lingering in your codebase long after its purpose is gone and adding noise to every future debugging session.

If Variation B wins:

- In the ConfigCat dashboard, change the rule to serve true to 100% of users

- Verify the full rollout for 24 to 48 hours before touching the code

- Deploy a code update that removes the flag and hardcodes the winning button text

- Delete the flag from the ConfigCat dashboard

- Archive the Amplitude chart for future reference

If Variation A wins or the results are inconclusive:

- Set the flag to false for 100% of users in the dashboard

- Deploy a code update that removes the flag while keeping the original button text

- Delete the flag from the ConfigCat dashboard

Either way, the flag gets deleted. Understanding feature flag lifespans and treating cleanup as part of the feature work rather than an afterthought is what keeps a growing flag system manageable.
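One lightweight way to make that cleanup hard to forget is to record an owner and a removal date for every experiment flag and check them in CI. The sketch below assumes a hypothetical in-repo registry (FlagRecord, overdueFlags, and the example entry are made up); it is not a ConfigCat feature.

```typescript
interface FlagRecord {
  key: string; // ConfigCat feature flag key
  owner: string; // who is responsible for removing it
  removeBy: string; // ISO date (YYYY-MM-DD) by which the flag should be gone
}

// Returns the flags whose removal date is earlier than `today`.
function overdueFlags(flags: FlagRecord[], today: Date): FlagRecord[] {
  return flags.filter((flag) => new Date(flag.removeBy).getTime() < today.getTime());
}

const registry: FlagRecord[] = [
  { key: "canShowUpdatedButtonText", owner: "growth-team", removeBy: "2024-06-30" },
];

// A CI job would run this with the current date and fail the build
// whenever the result is non-empty.
const overdue = overdueFlags(registry, new Date("2024-07-15"));
console.log(overdue.map((flag) => flag.key)); // [ 'canShowUpdatedButtonText' ]
```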

Step 8 (bonus): Track Feature Flag Exposure in Amplitude

ConfigCat exposes a flagEvaluated hook that fires every time a flag is evaluated for a user. You subscribe to it once at initialization, and your handler sends an $exposure event to Amplitude Experiments for each evaluation. You don't need to manually track the variation on every button press or screen render; the hook handles it.

The $exposure events populate Amplitude Experiments automatically. An Amplitude Identify call in the same handler sets the flag variant as a user property, so every subsequent event for that user carries it and you can filter any existing chart by which variation they saw. Full integration details are in the ConfigCat Amplitude documentation.
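The exposure part of that handler can be sketched as follows. This is a hypothetical, dependency-free version: the evaluation details are reduced to their key and value fields, Amplitude's track function is passed in, the Identify call is left out, and the once-per-session deduplication is an assumption of this sketch (flagEvaluated fires on every evaluation, including re-renders). The flag_key and variant properties follow Amplitude's $exposure event format. In ConfigCat's JavaScript SDKs, hooks are registered through the setupHooks option, for example hooks.on("flagEvaluated", handler); check the ConfigCat Amplitude documentation for the exact wiring in configcat-react.

```typescript
// The two fields of ConfigCat's evaluation details this sketch uses.
interface EvaluationDetailsLike {
  key: string;
  value: unknown;
}

type TrackFn = (
  eventType: string,
  eventProperties?: Record<string, unknown>,
) => unknown;

// Returns a flagEvaluated handler that sends one $exposure event per
// (flag key, served value) pair for the lifetime of the handler,
// i.e. once per app session if it is created at startup.
function createExposureHandler(track: TrackFn) {
  const alreadySent = new Set<string>();
  return (details: EvaluationDetailsLike): void => {
    const variant = String(details.value);
    const dedupeKey = `${details.key}=${variant}`;
    if (alreadySent.has(dedupeKey)) return;
    alreadySent.add(dedupeKey);
    track("$exposure", { flag_key: details.key, variant });
  };
}

// Simulate repeated evaluations of the same flag, e.g. from re-renders.
// Only the first call reaches track.
const onFlagEvaluated = createExposureHandler((type, props) => console.log(type, props));
onFlagEvaluated({ key: "canShowUpdatedButtonText", value: true });
onFlagEvaluated({ key: "canShowUpdatedButtonText", value: true });
```

Deduplicating keeps exposure volume proportional to users rather than renders, and a changed value (for example after you turn the flag off) still produces a new exposure. Drop the Set if you prefer raw evaluation counts.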

Summary

A/B testing in React Native does not require a separate testing platform or app store submission for each variant. A feature flag framework gives you a cleaner model: ship one build, control the split from a dashboard, let the flagEvaluated hook handle exposure tracking automatically, and make decisions based on real user behavior.

The test in this guide was a button text change. The same code handles pricing page variants, onboarding flow experiments, checkout redesigns, and any other change where you have a clear hypothesis and a measurable outcome.

ConfigCat's Forever Free plan covers everything shown in this guide with no credit card required. It integrates in under 10 minutes, and ConfigCat's React SDK works in React Native out of the box. Your first A/B test can be live before your next standup.

To keep up with other posts like this and announcements, follow ConfigCat on X, Facebook, LinkedIn, and GitHub.